By: The Trek News Desk

The Indian government has introduced stricter regulations for handling AI-generated and deepfake content online, requiring social media and digital platforms to remove content flagged by competent authorities or courts within three hours.

On Tuesday, February 10, 2026, the Ministry of Electronics and Information Technology (MeitY) notified amendments to the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Rules, 2021. The revised rules will come into effect on February 20, 2026.

For the first time, the rules define AI-generated and synthetic content. The definition covers audio, video, or audio-visual material that is created or altered using AI and appears authentic. Routine edits, accessibility improvements, and legitimate educational or design work are excluded from this definition.

Under the new framework, AI-generated content is treated on par with all other digital information. This means that the legal standards used to determine whether other online content is unlawful will now also apply to AI-generated and synthetic material.

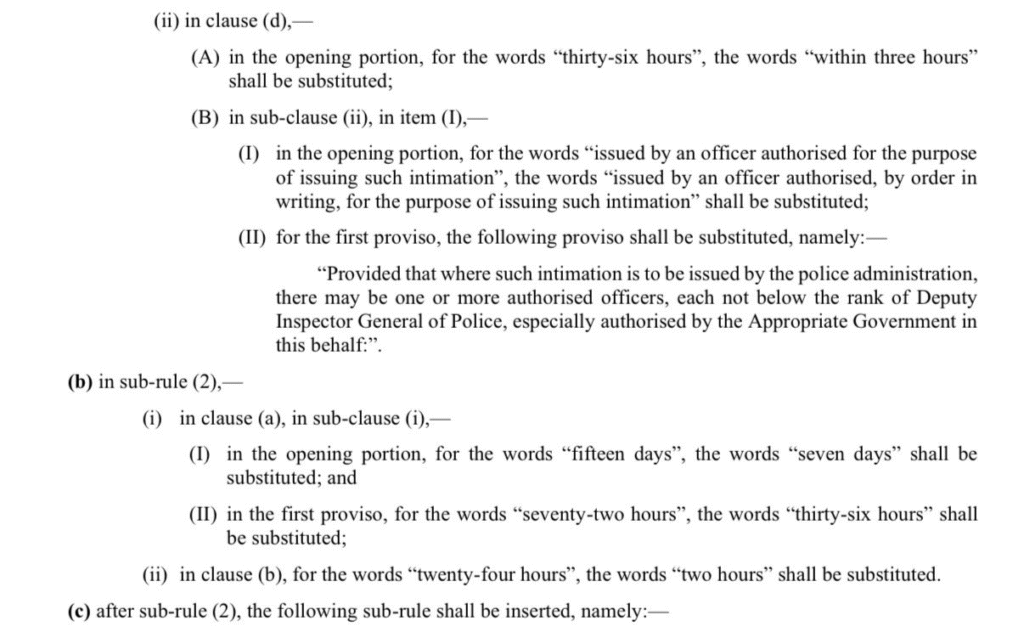

Platforms are required to comply with government or court orders within three hours, a significant reduction from the previous 36-hour window. User grievance redressal processes have also been accelerated under the new rules.

Platforms that allow the creation or sharing of AI or synthetic content must clearly label such content. Where technically feasible, the content must also include permanent metadata or identifiers. Once applied, these labels or identifiers cannot be removed or suppressed by intermediaries.

The government has directed platforms to deploy automated tools to prevent the dissemination of illegal, deceptive, non-consensual, or harmful AI content. This includes material related to forged documents, child sexual abuse, explosives, or impersonation.

Officials emphasised that the amendments aim to strengthen trust in digital platforms while curbing the misuse of AI technologies that could harm users.

Source: News Agencies